FBI vs Apple: Why giving up security and privacy could hurt us all – StartupSmart

By Suelette Dreyfus and Shanton Chang

“You have nothing to fear if you have nothing to hide” is an argument that is used often in the debate about surveillance.

The latest incarnation of that debate is currently taking place in the case of the US government versus Apple. The concern that technology experts have is not whether you have anything to hide, but whom you might want to hide your information from.

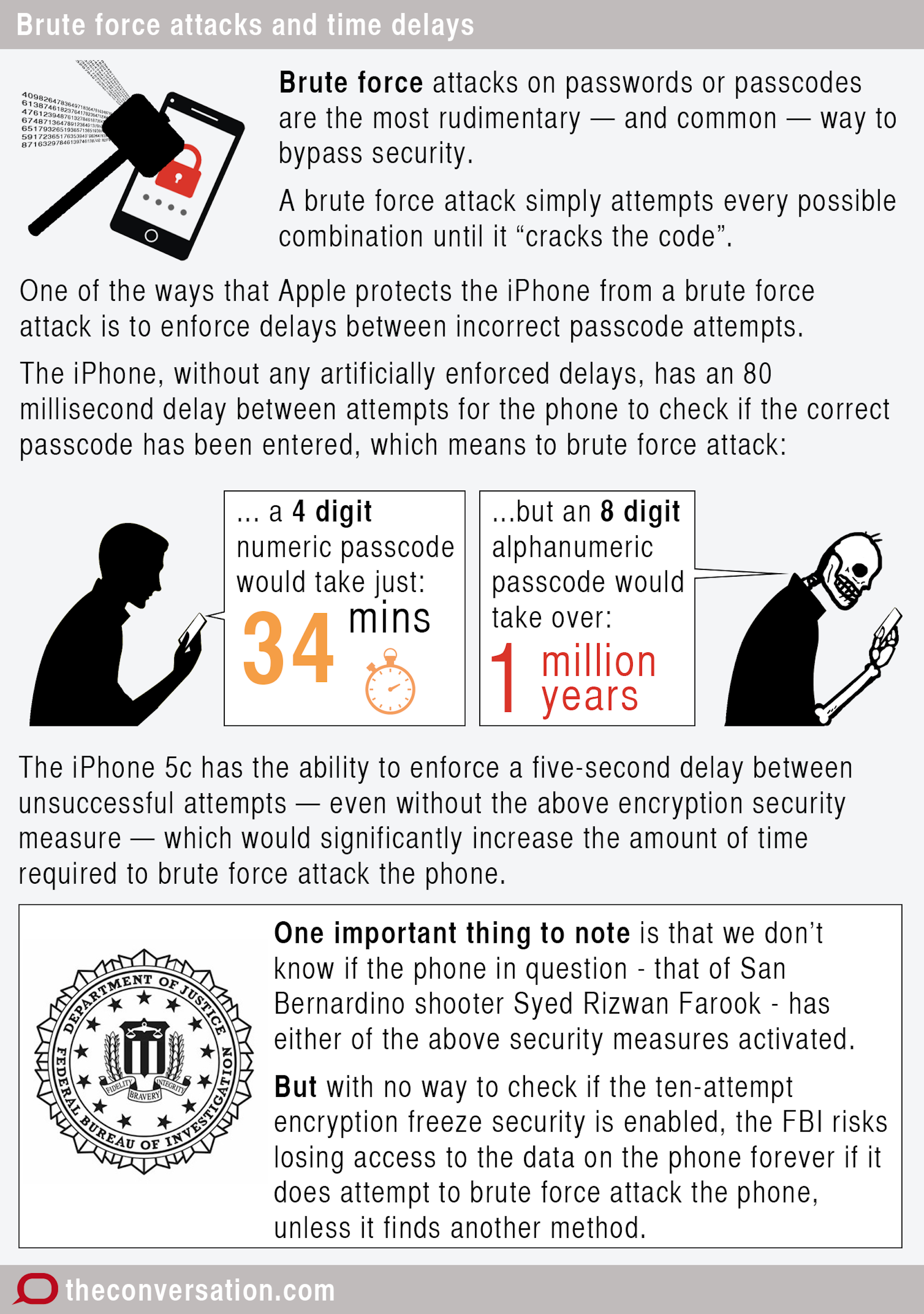

Last week a US court ordered Apple to create a special tool so the FBI could break a security feature on an iPhone 5c. The phone belonged to one of the shooters in an attack resulting in the deaths of 14 people in San Bernardino, California, in December 2015.

In this case, Apple is fighting the ruling. Apple CEO Tim Cook argues that this is a dangerous precedent, and it is not prudent to build a system with a hacking tool that can be used against Apple’s very own system.

Beyond those concerned with civil liberties, many in the security technology field, including Google, WhatsApp and now Facebook, are just as worried as Cook.

This is because a hole in security is a dangerous thing, despite assurance that only the “good guys” will use it. Once a system is compromised, the hole exists – or, in this case, the master tool that can make it exists. This means others may be able to steal and use such a tool, or copy it.

But how likely is this to happen? If you create a key to unlock something, the question becomes how well you can hide the key. How hard would an adversary – either a state actor or a commercial competitor – try to acquire the key? We expect some would try very hard.

As it is, cybercrime is a non-trivial matter. There is already a “dark market” among hackers and organised crime for unpatched security vulnerabilities. When one includes government intelligence agencies, it’s a sector with a budget of hundreds of millions of dollars.

It’s likely that a vulnerability relating to Apple’s security key used for providing auto-updates to all Apple iOS devices – including iPhones, iPods and iPads – would be among the most attractive targets. The risk to Apple in loss of reputation for an all-pervasive security and privacy flaw would be enormous.

Secure brand

A brand is a kind of promise, and Apple’s brand promises security and privacy. The FBI’s push is not just about bypassing that security; it is a much more aggressive step. Such a step will hurt Apple’s brand.

The cynic could argue that Apple is doing this to ensure trust with customers and maintain profits, and that might well be the case. However, it should not detract from the very real security threat to all its customers.

It also creates a secondary risk that international customers, such as those in Australia, may drop US companies’ products in favour of products made in countries that do not force business to trade away customer privacy.

Apple is reported as saying no other country in the world has asked it to do what the US Department of Justice has.

There is also a bigger picture here. The FBI is trying to shift the frontier of how far governments can force businesses to bow to their will in the name of security. This is quite different from classic government regulation of companies for clean air or water, or to prevent financial chicanery.

It is fundamentally about whether the government can or should compel a business to spend its own resources to actively break its own product, thus hurting its own customers and brand.

And there are signs that some in the US government believe they should have this power. In a secret White House meeting late last year, the US National Security Council issued a “decision memo” that included “identifying laws that may need to be changed” in order to develop encryption workarounds.

Even as the White House was claiming it wouldn’t legislate backdoors in encryption, it now seems there was another agenda being enacted that was not immediately visible. The Apple case may be part of a strategy to wedge any company that chooses to put its loyalty to its customers ahead of government dictates.

Certainly the choice of this test case is interesting.

Does the government even need to hack the phone?

Edward Snowden tweeted a summary of facts about the specific case that appeared to undermine the legitimacy of the FBI’s claim that breaking the iPhone is so necessary.

Key revelations were that the FBI already has the suspect’s communications records (as stored by the service provider). It also has backups of all the suspect’s data until just six weeks before the crime. And co-workers’ phones provided records of any contact with the suspect, so it’s possible to know the nature of the shooter’s peer contacts.

Further, he noted, there are other means of accessing the device without demanding Apple break its own product, despite what appears to be a sworn declaration by the FBI to the contrary.

Perhaps most surprising is that the phone in question was reported to be a government-issued work phone, not a “secret terrorist” device. Such phones already require users to give monitoring permissions.

In other words, there should be a long and fruitful digital trail without Apple’s help. In fact, the hotly contested iPhone is not even the shooter’s personal phone. The shooter destroyed his personal phone and other digital media before he was killed in the police shoot-out.

This highlights that giving up security and privacy on a mass scale may offer little return, as the “villains” have many other ways to hide their transactions.

Politicians who seek to politicise this issue would therefore do well to understand the security risks and threats before making pronouncements about it. There is certainly more at stake here than just political popularity.

Suelette Dreyfus is a lecturer at the Department of Computing and Information Systems at the University of Melbourne.

Shanton Chang is an associate professor in information systems at the University of Melbourne.

This article was originally published on The Conversation.
